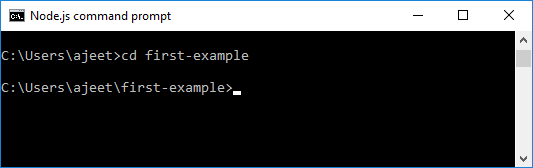

Querying a list of data in discrete parts

Pagination means fetching one part of a large dataset at a time, and continuing to fetch parts until the whole dataset has been received. This helps optimize our app's performance, because trying to get all the data at once results in a slow load time and slow rendering of the results in the UI.

For example, let's say you have a blog website, and on the blog page you display blog posts in a list view. If you have 1000 blog posts in your database, the blog list page will fetch all 1000 blog posts and display them.

Fetching 1000 blog posts in one swoop will eat up rendering time. Mapping through the fetched 1000 blog posts and rendering them on the UI will also eat up browser memory and performance. In this case, we might experience a slow response from the browser, and the UI will be laggy in responding to our actions. The way to mitigate this is to paginate our queries. For example, we can fetch 10 blog posts first; then, as the user scrolls the list view, we fetch the next 10 blog posts and append them to the UI. This way we optimize our app's performance.

Doing this in GraphQL is pretty easy.

Now, in pagination, data is broken into pages. We can define how much data a page contains. That's where we use the offset and limit. Limit holds the number of records a page will contain; offset is the number of records to skip. Let's say that, with our 1000 blog posts, we want a page to have 10 blog posts. We can set the offset to always skip the records already fetched and start from the next record after the last skipped one. Initially, to fetch the first page, the pagination details will be a limit of 10 and an offset of 0.

Cursor-based pagination gets its starting point in the dataset from the cursor, then iterates up to the limit and populates the result. It uses a record id in the database to locate its starting point, then slices off the required chunk. The last record in the returned chunk becomes the next cursor.

Note: Using IDs as the cursor is one way of implementing cursor-based pagination, but there are other ways that are beyond the scope of this article. We can use other fields in our records as the cursor, provided those fields are globally unique.

We have to return more info about the remaining data so that we know the next query to perform:

- Total count: This tells us the total count of data in the database.
- Has next page: This indicates whether we have exhausted the pages or there are more pages.
- Next cursor: The cursor to use in the next query.

This information is computed and returned in the query result. This uses a concept called Connection.

Connection is a concept that started with RelayJS and is used for GraphQL. This concept is used by Facebook, Twitter, and GitHub for fetching paginated records.

A Connection is an object that contains Edges and Nodes.

- Connection: This object contains metadata about the paginated field. It is mostly used in cursor-based pagination because it gives us the extra info we use to perform the next query.
- Edges: This object contains the info about the parent and the child it contains. It connects two nodes, representing some kind of relationship between them. Each edge holds metadata about each object in the returned paginated result.

Let's see a complete example of cursor-based pagination in an Express.js GraphQL server.

First, we scaffold an Express.js project. The core of the pagination logic is:

    let afterIndex = data.findIndex(datum => datum.id === afterCursor)
    const slicedData = data.slice(afterIndex, afterIndex + first)
    let hasNextPage = data.length > afterIndex + first

We used the afterCursor argument to get the index of the cursor in the database. We used Array#slice to collect the records starting from the afterCursor index, with the first argument giving the number of records to collect. The result is mapped using the Array#map method, so each news item in the result is converted to an edge. In the map() callback function, each news item is converted to a node, and the cursor is set using the news item id.

Further down, we set the startCursor. We got the last record in the edges and referenced its node object. This allows the dev to use this startCursor to query for another set of news items.
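The offset and limit arithmetic described in the article can be sketched in a few lines of plain JavaScript. This is only an illustration under stated assumptions: the `posts` array is a made-up in-memory stand-in for the 1000 blog posts, and `getPage` and `offsetForPage` are hypothetical helper names, not part of any library.

```javascript
// Hypothetical in-memory dataset standing in for the 1000 blog posts.
const posts = Array.from({ length: 1000 }, (_, i) => ({
  id: i + 1,
  title: `Post ${i + 1}`,
}));

// Return one page: skip `offset` records, then take up to `limit` records.
function getPage(data, limit, offset) {
  return data.slice(offset, offset + limit);
}

// Compute the offset for a 1-based page number.
function offsetForPage(page, limit) {
  return (page - 1) * limit;
}

// First page: limit 10, offset 0. Second page: limit 10, offset 10.
const firstPage = getPage(posts, 10, offsetForPage(1, 10));
const secondPage = getPage(posts, 10, offsetForPage(2, 10));
```

Each later page simply advances the offset by the limit, so the client only needs to remember which page it is on.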

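The cursor-based steps the article walks through (find the cursor's index, slice, map to edges, compute hasNextPage and startCursor) can be put together roughly as follows. This is a minimal sketch and not the article's full Express.js server: `newsItems` is a hypothetical in-memory dataset, `paginateNews` is an illustrative function name, and the `pageInfo` and `totalCount` field names are assumptions modeled on the Relay-style Connection shape. One deliberate difference from the snippet in the article: the slice here starts at the record after the cursor, so the last item of one page is not repeated on the next.

```javascript
// Hypothetical in-memory "database" of news items.
const newsItems = Array.from({ length: 25 }, (_, i) => ({
  id: `news-${i + 1}`,
  title: `News item ${i + 1}`,
}));

// Build a Connection-shaped result from `first` and `afterCursor`.
function paginateNews(data, { first, afterCursor }) {
  // With no cursor, start from the beginning of the dataset.
  let startIndex = 0;
  if (afterCursor != null) {
    const cursorIndex = data.findIndex((datum) => datum.id === afterCursor);
    if (cursorIndex === -1) {
      throw new Error(`Unknown cursor: ${afterCursor}`);
    }
    // Assumption: begin just after the cursor record to avoid repeating it.
    startIndex = cursorIndex + 1;
  }

  // Collect up to `first` records from the starting point.
  const slicedData = data.slice(startIndex, startIndex + first);

  // Each record becomes an edge; the record id doubles as the cursor.
  const edges = slicedData.map((node) => ({ node, cursor: node.id }));

  // More pages remain if records exist beyond this slice.
  const hasNextPage = data.length > startIndex + first;

  // As in the article, startCursor is taken from the last edge's node.
  const startCursor =
    edges.length > 0 ? edges[edges.length - 1].node.id : null;

  return {
    totalCount: data.length,
    edges,
    pageInfo: { startCursor, hasNextPage },
  };
}

// Usage: feed each page's startCursor into the next query.
const page1 = paginateNews(newsItems, { first: 10 });
const page2 = paginateNews(newsItems, {
  first: 10,
  afterCursor: page1.pageInfo.startCursor,
});
const page3 = paginateNews(newsItems, {
  first: 10,
  afterCursor: page2.pageInfo.startCursor,
});
```

In a GraphQL server, a function like this would sit inside the resolver for the paginated field, with `first` and `afterCursor` arriving as query arguments.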